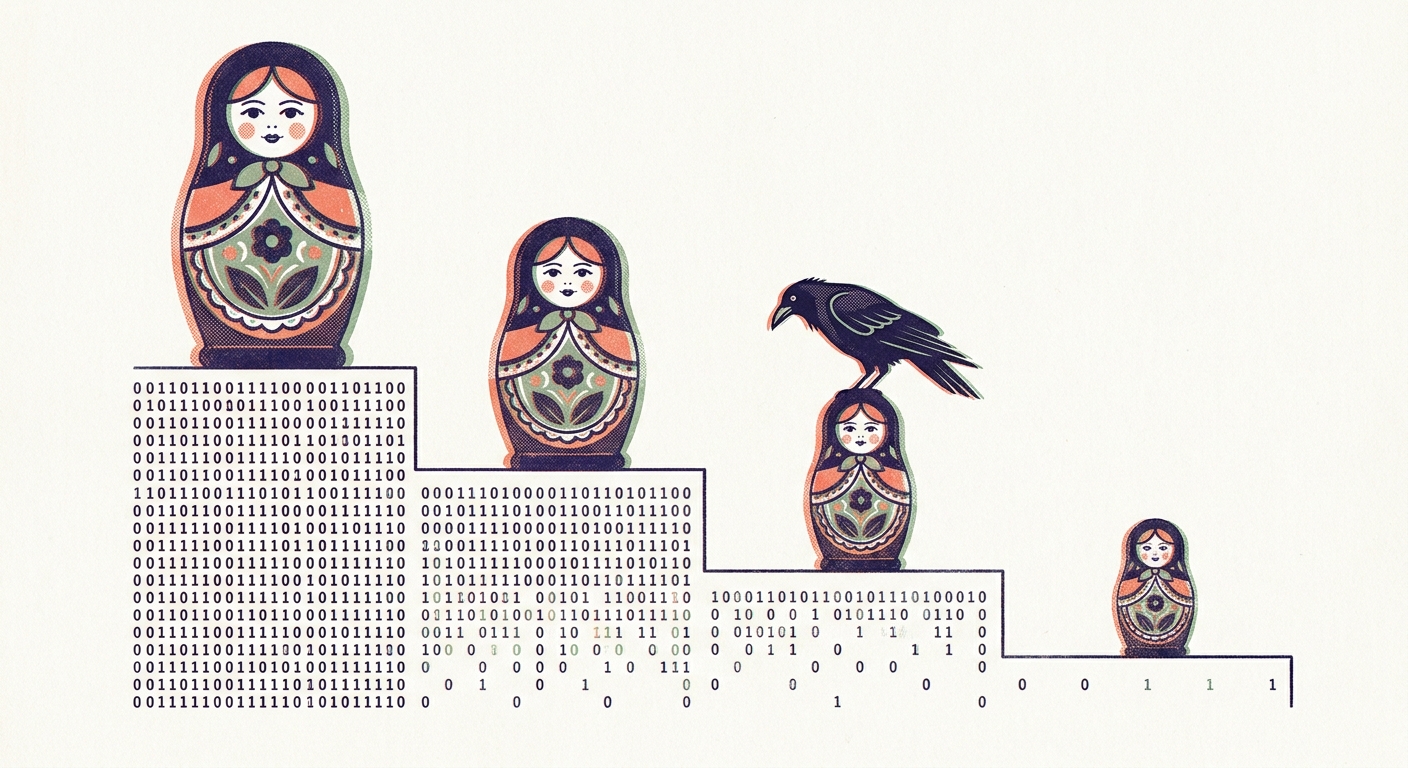

When Matryoshka Does Buy You Sign-Bit Compression

Matryoshka Doesn’t Buy You Sign-Bit Compression went hard on Gemini’s 3072-d Matryoshka embeddings: post-hoc dimension selection — prefix, suffix, spaced, random — collapsed to within ±0.018 R@100 once you binarized. The Matryoshka training was a property of float32 space; sign-packing washed it out.

Jina’s jina-embeddings-v5 (April 2026) is built differently. It combines Matryoshka training with a Global Orthogonal Regularizer (GOR) that pushes embeddings toward a uniform distribution on the sphere, and both were chosen with binary quantization explicitly in mind. The Table 6 ablation in §5.3.4 reports −0.019 nDCG@10 going from BF16 to binary on the MTEB Retrieval subset, end-to-end trained. I wanted to know what zero-training centered SimHash does to those same embeddings.

The headline

BEIR/SciFact, 300 queries, 5183 documents, jina-v5-nano with the retrieval adapter, 768-d full precision:

| | nDCG@10 | Δ vs fp32 |
|---|---|---|
| fp32 baseline | 0.758 | — |
| centered SimHash 1-bit (zero training) | 0.730 | −0.028 |

Jina’s published binary number on the MTEB Retrieval subset is −0.019. Mine on BEIR/SciFact is −0.028. The benchmarks differ, so the 0.009 gap is not a like-for-like comparison and I would not read much into it. Inference-time corpus-mean centering plus sign-packing lands within 0.009 of what end-to-end GOR training reports for binary quantization, on a model the authors did not design with remax in mind. At 768 bits per vector, that is 96 bytes per document at 0.730 nDCG@10: a 32× storage reduction from the 3,072 bytes of full fp32, with no fine-tuning.
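The recipe in that paragraph (center on the corpus mean, keep the sign, pack the bits) fits in a few lines of NumPy. This is a minimal sketch under assumptions, not remax’s actual API: the function names are hypothetical, and the random matrix stands in for real encoder output.

```python
import numpy as np

def centered_sign_pack(embeddings: np.ndarray, mean: np.ndarray) -> np.ndarray:
    """Subtract the corpus mean, keep each coordinate's sign, pack 8 signs per byte."""
    bits = (embeddings - mean) > 0
    return np.packbits(bits, axis=1)

def hamming_distances(query_code: np.ndarray, doc_codes: np.ndarray) -> np.ndarray:
    """Number of differing bits between one packed query code and every document code."""
    xor = np.bitwise_xor(doc_codes, query_code)
    return np.unpackbits(xor, axis=1).sum(axis=1)

# Synthetic stand-in for encoder output (assumption: 768-d float32 vectors
# sharing a common offset, which is what centering is meant to remove).
rng = np.random.default_rng(0)
docs = (rng.standard_normal((1000, 768)) + 0.5).astype(np.float32)

corpus_mean = docs.mean(axis=0)          # computed once, reused for queries
codes = centered_sign_pack(docs, corpus_mean)

bytes_per_doc = codes.shape[1]           # 768 bits / 8 bits per byte
fp32_bytes_per_doc = docs.shape[1] * 4   # 768 floats * 4 bytes each
print(bytes_per_doc, fp32_bytes_per_doc // bytes_per_doc)
```

Queries go through the same `centered_sign_pack` call with the same `corpus_mean`, so both sides of the Hamming comparison share one origin.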

The compound frontier

Matryoshka truncation gives a dimension knob. remax’s stacked SimHash ladder gives a precision knob — k=1 is plain SimHash, k=2 stacks two independent rotations, k=4 stacks four, and so on. Variance shrinks roughly as 1/k while every step stays rank-correct. Crossing the two knobs on jina-v5-nano gives a 6×5 operating-space grid:
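To make the two knobs concrete, here is one way the crossing could look in code. It is a sketch under stated assumptions rather than remax’s implementation: I assume the dimension knob is a plain prefix slice, that the first tier uses the identity so k=1 reduces to the plain centered sign code, and that each extra tier is a random orthogonal rotation drawn via QR. All function names are hypothetical.

```python
import numpy as np

def random_rotation(dim: int, rng: np.random.Generator) -> np.ndarray:
    """Random orthogonal matrix from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.standard_normal((dim, dim)))
    return q * np.sign(np.diag(r))  # sign fix so the draw is uniform over rotations

def stacked_codes(embeddings: np.ndarray, mean: np.ndarray,
                  dim: int, k: int, seed: int = 0) -> np.ndarray:
    """Truncate to the first `dim` coordinates, then concatenate k sign codes."""
    rng = np.random.default_rng(seed)
    centered = embeddings[:, :dim] - mean[:dim]      # dimension knob: prefix slice
    rotations = [np.eye(dim)] + [random_rotation(dim, rng) for _ in range(k - 1)]
    blocks = [np.packbits((centered @ rot) > 0, axis=1) for rot in rotations]
    return np.concatenate(blocks, axis=1)            # precision knob: k blocks

def bytes_per_doc(dim: int, k: int) -> int:
    """Storage cost of one document at a given grid cell."""
    return dim * k // 8

# Synthetic stand-in for 768-d encoder output (assumption).
emb = np.random.default_rng(1).standard_normal((50, 768))
cell = stacked_codes(emb, emb.mean(axis=0), dim=128, k=4)
print(cell.shape, bytes_per_doc(128, 4), bytes_per_doc(768, 1))
```

The same seed must be used for documents and queries so both are rotated by the same matrices; otherwise the Hamming distances between the extra blocks mean nothing.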

nDCG@10 across embedding dimension (rows) and precision tier (columns). Darker indigo = higher recall. The 768d × 1-bit cell is outlined in coral — it’s the Pareto elbow.

That grid contains 24 quantized operating points. Most of them are dominated — you can get the same recall for fewer bytes somewhere else in the table. The points that aren’t dominated trace a clean Pareto curve with a structural inflection at 96 bytes per document:

Operating points on log-bytes vs nDCG@10. Coral = Pareto frontier; faded indigo = dominated points; sage dashed line = fp32 baseline. The 96 B/doc elbow (circled) marks where the curve transitions from steep to nearly flat.

The shape is doing real work here. Below the elbow, adding dimensions buys you more than adding stacked bits: at 64 B/doc, 512d × 1-bit (0.703) beats 128d × k=4 (0.653) by 0.050 nDCG@10. Above the elbow, the reverse holds: at 192 B/doc, 768d × k=2 (0.734) edges past anything you can build by truncating and re-stacking. Spend bytes on dimensions until you have all of them, then spend on bits. The model’s Matryoshka training gives the first half of that rule its teeth; remax’s ladder does the same for the second half.
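Picking out the non-dominated points is mechanical once each cell has a byte cost and a score. The sketch below uses only the four operating points quoted in this post, not the full grid; the function name and tuple layout are my own choices for illustration.

```python
def pareto_frontier(points):
    """Return the points not dominated by a cheaper-or-equal, better-scoring point.

    `points` is a list of (label, bytes_per_doc, ndcg_at_10) tuples.
    """
    frontier = []
    best = float("-inf")
    # Walk from cheapest to most expensive; within a byte tie, best score first.
    for label, nbytes, score in sorted(points, key=lambda p: (p[1], -p[2])):
        if score > best:  # strictly better than everything at or below this cost
            frontier.append((label, nbytes, score))
            best = score
    return frontier

# Operating points quoted in the text: bytes = dim * k / 8.
points = [
    ("128d x k=4", 128 * 4 // 8, 0.653),
    ("512d x 1-bit", 512 * 1 // 8, 0.703),
    ("768d x 1-bit", 768 * 1 // 8, 0.730),
    ("768d x k=2", 768 * 2 // 8, 0.734),
]

frontier = pareto_frontier(points)
for label, nbytes, score in frontier:
    print(f"{nbytes:>4} B/doc  {score:.3f}  {label}")
```

On these four points the 128d × k=4 cell drops out, because 512d × 1-bit costs the same 64 bytes and scores higher.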

The paper publishes truncation in §5.4 and binary quantization in Table 6, but not the product. That’s a curiosity rather than a critique — the paper is about model quality, not retrieval-system design, and Jina did the genuinely hard work of training both knobs in. The combined picture is worth charting because that’s where most self-hosters actually live.

The floor

At 32 dimensions, 1-bit collapses (−0.307 from fp32). Stacking helps — k=8 recovers to 0.421 — but cannot recreate missing dimensions. Below the rank-recovery threshold, dim count dominates bit budget, and the frontier hits a floor you cross earlier than you’d expect — somewhere between 32 and 128 dimensions on this corpus. The top-left corner of the heatmap is the visible evidence: a pale square where nothing remax does will save you.

If you’re tempted by 1536× compression numbers from somebody’s marketing slide, run the floor test first.
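One cheap way to run a floor test on your own corpus, before you have relevance labels, is to sweep the truncation length and check how much of the full-precision top-k the 1-bit code still retrieves. This is a label-free proxy I am assuming for illustration, not the nDCG@10 measurement used in this post; the function name and the synthetic data are hypothetical.

```python
import numpy as np

def topk_overlap_by_dim(docs, queries, dims, topk=10):
    """For each prefix length, the fraction of the full-precision top-k
    that the 1-bit Hamming top-k also retrieves, averaged over queries."""
    reference = np.argsort(-(queries @ docs.T), axis=1)[:, :topk]
    mean = docs.mean(axis=0)
    overlap = {}
    for dim in dims:
        d_bits = (docs[:, :dim] - mean[:dim]) > 0
        q_bits = (queries[:, :dim] - mean[:dim]) > 0
        # Hamming distance = number of sign bits that differ.
        ham = (q_bits[:, None, :] != d_bits[None, :, :]).sum(axis=2)
        got = np.argsort(ham, axis=1, kind="stable")[:, :topk]
        hits = [len(set(r) & set(g)) for r, g in zip(reference, got)]
        overlap[dim] = float(np.mean(hits)) / topk
    return overlap

# Synthetic stand-in; swap in real document and query embeddings.
rng = np.random.default_rng(0)
docs = rng.standard_normal((500, 768))
queries = rng.standard_normal((20, 768))
result = topk_overlap_by_dim(docs, queries, dims=[32, 64, 128, 256, 512, 768])
for dim, value in result.items():
    print(dim, round(value, 3))
```

If the overlap falls off a cliff at some prefix length on your data, that is the region to avoid regardless of how good the compression ratio looks.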

What this means

For practitioners running large in-memory retrieval indices:

- If you’re using jina-v5 or embeddinggemma (and you probably should; both are state of the art at their size class), a 1-bit first stage is right there waiting. Encode with the retrieval adapter, center on your corpus mean, sign-pack, scan with Hamming. Use float32 for stage 2 reranking. The 96 B/doc operating point gets you 32× compression at −0.028 nDCG@10, no additional training required. For 100 million documents that’s 9.6 GB of binary codes, comfortable in RAM on a midrange server, instead of roughly 307 GB of fp32 that wants a beefy box and a thoughtful retrieval engine.

- If you’re not on a GOR-trained encoder — SPECTER2’s published artifacts, an older corpus, an in-house model — the same recipe still applies, and the centering step does more work. remax bundles the recipe and adds the stacked-ladder knob so you can dial in a precision tier between 1 bit and float depending on your byte budget and your tolerance for recall loss.
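The two-stage pattern from the first bullet, plus its memory arithmetic, can be sketched as follows. The function name, shortlist size, and synthetic data are assumptions for illustration; a production scan would use popcount over packed words rather than unpacking bits.

```python
import numpy as np

def two_stage_search(query, docs, codes, mean, shortlist=100, topk=10):
    """Stage 1: Hamming scan over packed sign codes. Stage 2: float32 rerank."""
    q_code = np.packbits((query - mean) > 0)
    ham = np.unpackbits(np.bitwise_xor(codes, q_code), axis=1).sum(axis=1)
    shortlist = min(shortlist, len(codes))
    candidates = np.argpartition(ham, shortlist - 1)[:shortlist]
    scores = docs[candidates] @ query            # full-precision dot product
    return candidates[np.argsort(-scores)[:topk]]

# Synthetic unit-norm stand-in for a 768-d corpus (assumption).
rng = np.random.default_rng(0)
docs = rng.standard_normal((2000, 768)).astype(np.float32)
docs /= np.linalg.norm(docs, axis=1, keepdims=True)
mean = docs.mean(axis=0)
codes = np.packbits((docs - mean) > 0, axis=1)   # 96 bytes per document

top = two_stage_search(docs[42], docs, codes, mean)

# Memory budget at 100 million documents, in decimal gigabytes.
n_docs = 100_000_000
binary_gb = n_docs * 96 / 1e9        # 1-bit codes
fp32_gb = n_docs * 768 * 4 / 1e9     # full float32 vectors
print(top[0], binary_gb, fp32_gb)
```

Only the shortlist ever touches the float32 vectors, so those can live on disk or in a slower tier while the binary codes stay in RAM.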

The earlier post was about post-hoc Matryoshka — selecting dimensions after training. When Matryoshka is trained-in and paired with GOR, you get a frontier instead of a flat line: a clean Pareto curve with a 96 B/doc elbow, an honest floor below 64 dimensions, and a zero-training recipe that closes most of the gap to end-to-end training on its own. The architecture decision — how much to spend on dimensions versus bits — falls out of the curve’s shape rather than out of guesswork.

Full experiment: remax PR #44. Prior posts in the series: One Bit Beats Two, Embedding Compression Is Mostly Centering, Three Gigs to Search a Hundred Million Papers, Matryoshka Doesn’t Buy You Sign-Bit Compression.