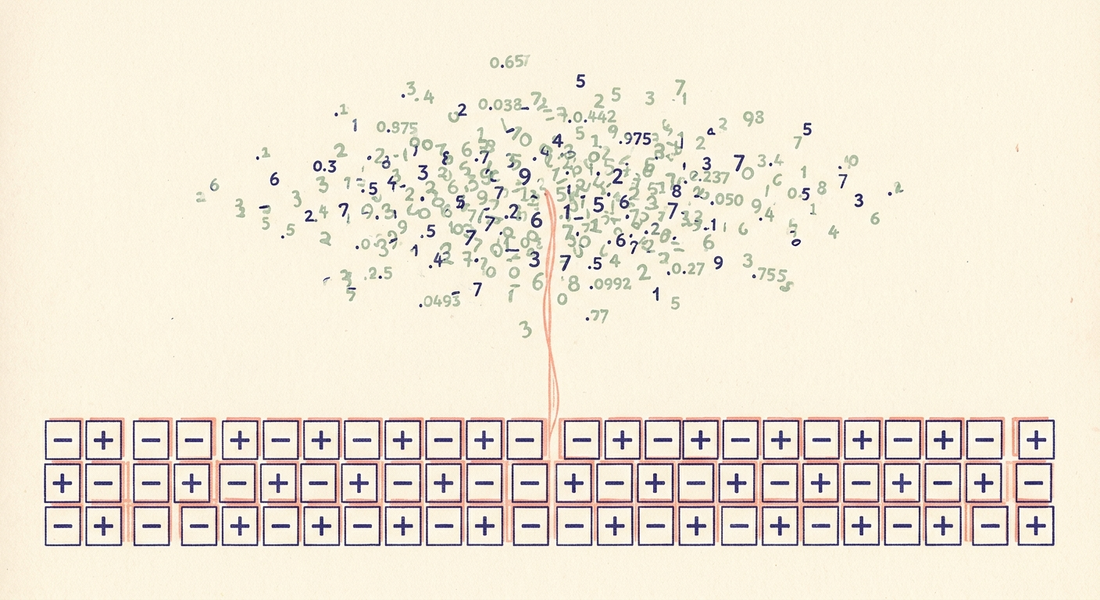

Your Embedding Has a Free Coarse Index In It

Companion to One Bit Beats Two, which made the case that 1-bit Matryoshka extraction beats 2-bit and 3-bit at retrieval rank. That argument leaned on remex, the codebook, the Haar rotation, the math.

This post is the operational follow-up. You don't need any of that. If your dense embeddings have a bounded mean and roughly isotropic post-centering distribution — which describes most modern encoders — the coarse index is already inside them. Two lines of numpy reach in and pull it out:

mu = corpus.mean(0)

codes = np.packbits((corpus - mu) > 0, axis=1)

32× storage reduction at the index layer. R@100 = 0.988 against float32 inner-product ground truth on SPECTER2. Encode time for 10,000 768-d vectors: 9 milliseconds. No library, no training, no codebook.

The numbers

Same setup as the companion: 10,000 broad-NLP SPECTER2 papers, 100 held-out queries, ground truth is top-10 by raw inner product.

N R@N corpus scanned

10 0.620 0.1%

50 0.964 0.5%

100 0.988 1.0%

200 0.997 2.0%

500 1.000 5.1%

Read the middle row: scan 1% of the corpus via Hamming distance on packed bits, recover 98.8% of the true top-10. That's the operating point for a two-stage retriever. Stage 1 trims 10,000 to 100 in a single SIMD-friendly XOR-and-popcount sweep over a 950 KB array; Stage 2 reranks those 100 with the float32 vectors you already have. End-to-end recall lands at or near 1.0.

Centering matters more than rotation

The full remex pipeline — Haar rotation plus centering plus sign — gets R@10 = 0.635 on the same data. Bare sign(x - mu) gets 0.620. The rotation buys 0.015 R@10. The centering buys 0.076.

sign(x), no centering: 0.544

sign(x - mu), centered: 0.620

sign(x - mu) + rotation: 0.635

The reason centering does the heavy lifting is mechanical. SPECTER2 has dimensions whose mean is far from zero — one is at 15.5, with corpus L2 norms in the 20–22 range. On those dimensions sign(x) is constant: they contribute zero bits to a Hamming distance while still occupying slots in the signature. Subtracting the corpus mean reclaims that budget. Rotation cleans up the residual dim-correlation tax, but it's a smaller effect.

A proper Lloyd-Max codebook handles all of this, which is why remex's 1-bit numbers are a hair better. But if all you want is a Stage-1 coarse pass, you can skip the codebook and ship sign(x - mu) today.

SimHash, basically

This isn't novel. Project a vector onto a direction, take the sign — that's a single bit of Charikar SimHash, locality-sensitive for cosine similarity, published in 2002. The recipe above is SimHash with the projection axes hard-coded as the standard basis after centering — which works as well as a random rotation when the centered data is roughly isotropic, as it is for most modern dense encoders. Two caveats: SimHash estimates cosine, so on embeddings whose L2 norms vary widely you'll want to L2-normalize before encoding — SPECTER2's norms cluster in 20–22, tight enough that cosine and inner-product rankings nearly coincide here. And on pathologically anisotropic encoders, the Haar rotation that remex provides earns its keep.

The framing matters more than the novelty. The default move when reaching for a Stage-1 is to install FAISS or ScaNN, fit a codebook, manage a rebuild step. If your retrieval traffic doesn't yet justify that overhead, two lines of numpy put you at 98.8% recall on 1% of the corpus.

Reproducer

import numpy as np

X = np.load("specter2_nlp_broad.npy") # (10000, 768) float32

rng = np.random.default_rng(99)

perm = rng.permutation(X.shape[0])

queries, corpus = X[perm[:100]], X[perm[100:]]

truth = np.argsort(-(queries @ corpus.T), axis=1)[:, :10]

mu = corpus.mean(0)

codes = np.packbits((corpus - mu) > 0, axis=1)

q_codes = np.packbits((queries - mu) > 0, axis=1)

LUT = np.array([bin(i).count('1') for i in range(256)], dtype=np.uint16)

def topN(q, codes, N):

d = LUT[np.bitwise_xor(codes, q)].sum(1)

idx = np.argpartition(d, N)[:N]

return idx[np.argsort(d[idx])]

for N in [10, 50, 100, 500]:

pred = np.array([topN(q_codes[i], codes, N) for i in range(100)])

hits = sum(len(set(pred[i]) & set(truth[i])) for i in range(100))

print(f"R@{N:>4d} = {hits / 1000:.3f}")

Reproduces the table byte-for-byte. The matched-bit-budget crossover against full remex came out of oaustegard/remax PR #10, which is also where this observation got pulled out as a separate result.

If you've got dense embeddings sitting in a database and you've been meaning to get to retrieval, you already have a Stage-1. It costs nothing to try.